The Accountability Gap: Why PMs Struggle to Own AI Outcomes

The uncomfortable moment

Every AI product eventually reaches the same moment. A user gets a wrong answer. A decision looks questionable. An edge case slips through. It is rarely catastrophic, but it is not acceptable either. And in that moment, the same question appears every time: who owns this?

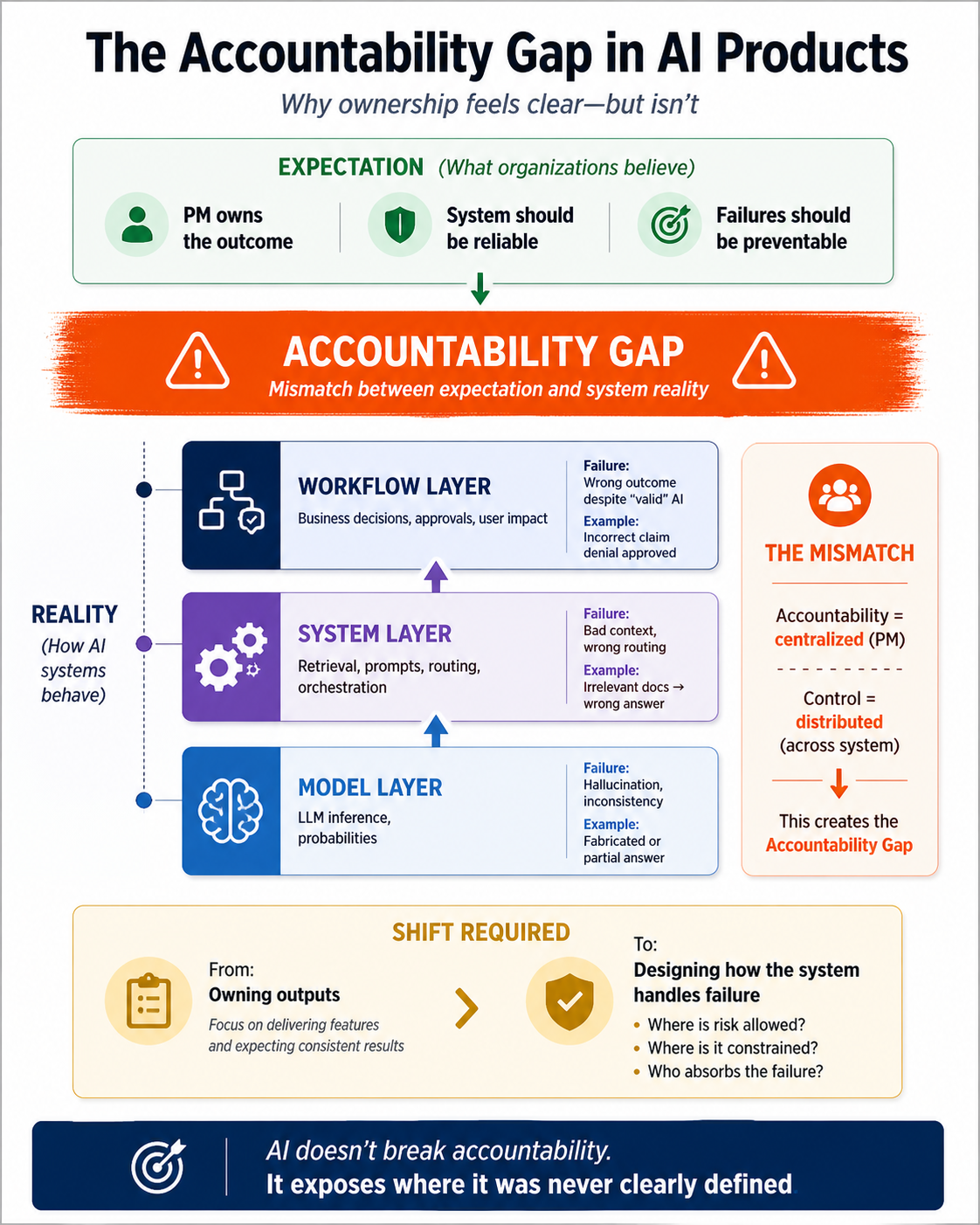

In traditional software, the answer is usually clear. In AI systems, it is not. That ambiguity is what creates the accountability gap.

The old model of ownership

Product management was built on a simple assumption: if you define the requirement clearly enough, the system will behave predictably. That assumption shaped how teams worked. Tickets had acceptance criteria. Test cases were binary. “Done” meant the feature either worked or it did not. When something failed, the team traced the issue, fixed it, and closed the loop. Ownership meant control. If the system behaved incorrectly, it was because something in the system was wrong—and therefore fixable.

Why AI breaks the model

AI systems do not behave that way. The same input can produce different outputs—sometimes better, sometimes worse, occasionally unacceptable. A support chatbot might answer one refund question perfectly and give a vague or incorrect answer to another user asking the same thing slightly differently. Nothing in the code changed. Nothing in the ticket changed. But the outcome did. That is the fundamental shift. The product is no longer fully deterministic. You can improve accuracy and reduce error rates, but you cannot guarantee consistency in the way traditional software allowed. Once that guarantee disappears, the old definition of ownership starts to break down. PMs are still held accountable for outcomes, but they no longer fully control the mechanism that produces them. That tension is not a tooling issue. It is a mismatch between expectation and system reality.

The human cost

This gap shows up psychologically before it shows up operationally. PMs are expected to stand behind outcomes that are inherently variable, and over time that creates real strain. There is guilt when something goes wrong and the root cause is not obvious. There is overconfidence when early success creates the illusion that the system is solved. And there is false certainty when teams are forced to label behavior as either working or not working, even when the truth sits somewhere in between. You see it most clearly in meetings. An executive asks, “Can we guarantee this won’t happen again?” The honest answer is usually no, but few people want to say that out loud. So teams either overcommit or deflect, and neither response builds trust.

Where the model gets blamed—and shouldn’t

Most visible failures are attributed to the AI, because the AI is the new capability the business has asked to do the work. When the user sees a wrong answer, a bad recommendation, or an odd decision, the natural assumption is that the AI failed. That is not always technically precise, but it is understandable. In practice, the AI is the thing people experience as the product. So even when the root cause includes retrieval, workflow, routing, or validation, the model tends to absorb the blame because it is the center of attention. That is part of the reality PMs have to manage. In AI products, the model is not just one component in the stack. It is often the visible face of the system, which means it becomes the first place people look when something goes wrong.

What PMs must define explicitly

Jira is a scribing tool. It records the work and the requirements, but it does not create the product definition for you. That is the PM’s job. AI forces PMs to be more explicit about what gets written into the story in the first place. The story has to capture probabilistic behavior: thresholds, fallback paths, exception handling, monitoring signals, escalation rules, and clear boundaries around what the system must not do. In AI, the story is not just a description of a feature. It is a definition of how the product should behave under uncertainty. That is the real shift. The challenge is not the tool. The challenge is the quality of the PM’s thinking. If the PM understands the probabilistic nature of the feature, Jira can capture it. If the PM does not, no tool will close that gap.
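As a rough illustration, the elements of such a story can be written down as data rather than prose. The sketch below is hypothetical: `StoryCriteria`, `evaluate_release`, the field names, and every number are assumptions made for the example, not part of Jira or any other tool.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class StoryCriteria:
    """Hypothetical acceptance criteria for one AI feature story."""
    max_error_rate: float    # tolerated share of wrong answers in an evaluation sample
    alert_error_rate: float  # monitoring signal: above this, the team is alerted
    min_confidence: float    # below this score, the fallback path is used
    fallback: str            # name of the fallback path, e.g. "handoff_to_agent"
    forbidden_actions: frozenset = field(default_factory=frozenset)  # what the system must not do


def evaluate_release(criteria: StoryCriteria, wrong: int, total: int) -> str:
    """Compare an observed error rate against the tolerance written into the story."""
    if total <= 0:
        raise ValueError("total must be positive")
    rate = wrong / total
    if rate > criteria.max_error_rate:
        return "blocked"
    if rate > criteria.alert_error_rate:
        return "ship_with_alert"
    return "ship"


# Illustrative values only; real thresholds depend on the product and its risk.
refund_bot = StoryCriteria(
    max_error_rate=0.05,
    alert_error_rate=0.02,
    min_confidence=0.8,
    fallback="handoff_to_agent",
    forbidden_actions=frozenset({"issue_refund"}),
)
```

With these assumed numbers, `evaluate_release(refund_bot, 3, 100)` falls between the alert level and the tolerance, so the feature ships with monitoring turned up rather than passing or failing outright. The acceptance criterion is a tolerance band, not a binary test.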

Agile still matters

Agile is still relevant here because it gives the team the cadence to learn from production behavior and adapt over time. AI products are not just built; they are continuously calibrated. Prompts change, thresholds shift, retrieval improves, and workflows evolve based on what actually happens in production. Agile gives the team the rhythm to inspect that behavior, make adjustments, and keep improving the system as the model reveals more of its real-world patterns. The point is not that Agile solves uncertainty. The point is that it gives the team a structure for iterating on it.

The role-level trap

This leaves PMs in an uncomfortable position. They can overclaim ownership and promise reliability the system cannot guarantee, or they can underclaim ownership and appear evasive. Both are flawed responses. The issue is not whether PMs own outcomes. It is that ownership has changed shape. PMs still own the outcome, but not in the deterministic sense. They own how uncertainty is handled, where risk is absorbed, and how failure is contained. What looks like an architecture decision is increasingly a product decision. Whether the model should act at all, whether outputs should be validated, and whether a workflow should fall back to a human are not implementation details. They define trust, operational risk, and the cost of failure.

A better definition of accountability

Product managers have always owned outcomes. What has changed is the nature of the system. In AI products, accountability is no longer about guaranteeing correctness. It is about taking responsibility for how the system behaves when correctness cannot be guaranteed. That means defining how much uncertainty the product can tolerate, where that uncertainty is allowed to exist, and how it is contained when it crosses acceptable bounds. It means being explicit about tradeoffs rather than assuming they can be eliminated. The PM may not control every component, but they are accountable for how those components come together to produce an experience. That includes the points where certainty ends and judgment begins.

What better PMs do differently

The shift becomes visible in how strong PMs operate. They distinguish between different kinds of decisions instead of treating all AI outputs the same. A low-stakes suggestion is handled differently from a decision that affects money, compliance, or access. That distinction drives how much trust the system is allowed to place in the model.

They define success in terms of tolerance, not perfection. The question is no longer just whether the system is correct, but how often it can be wrong, how wrong it can be, and what happens when it is.

They design for containment rather than assuming errors can be eliminated. When the model is uncertain, the system needs a clear response, whether that is escalation, fallback, refusal, or a safer default path.

And they make ownership explicit across layers. Model quality, retrieval quality, and workflow validation each have clear responsibilities. The PM owns the decision about how much uncertainty the product is allowed to carry and how that uncertainty is experienced by the user.
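One way to picture the stakes distinction and the containment paths is a small routing rule. This is a minimal sketch under stated assumptions: it assumes the system exposes some confidence score between 0 and 1 for each output, and the `Stakes` tiers, the `route` function, the action names, and the thresholds are all invented for illustration.

```python
from enum import Enum


class Stakes(Enum):
    LOW = "low"    # e.g. a suggestion the user can ignore
    HIGH = "high"  # e.g. a decision affecting money, compliance, or access


def route(stakes: Stakes, confidence: float,
          low_threshold: float = 0.6, high_threshold: float = 0.9) -> str:
    """Pick a containment path from the decision's stakes and the assumed confidence score."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if stakes is Stakes.HIGH:
        # High-stakes decisions need more confidence and fall back to a person.
        return "auto" if confidence >= high_threshold else "escalate_to_human"
    # Low-stakes outputs tolerate more uncertainty and degrade to a safer default.
    return "auto" if confidence >= low_threshold else "safe_default"
```

The same confidence of 0.7 is acted on automatically for a low-stakes suggestion but escalated for a high-stakes decision. Where those two thresholds sit is the product decision the PM owns, not an implementation detail.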

Closing the gap

The accountability gap does not close by improving the model alone. It closes when PMs stop trying to guarantee outputs and start designing systems that can absorb failure intelligently. It closes when they make uncertainty explicit in the story, define the right boundaries up front, and use Agile to refine the system as production behavior becomes clearer. The PM has always owned the product outcome, AI or not. What changes is the level of ambiguity inside the product, which means PMs have to be more deliberate about defining uncertainty, boundaries, and fallback behavior. The accountability never moved. The complexity did.
